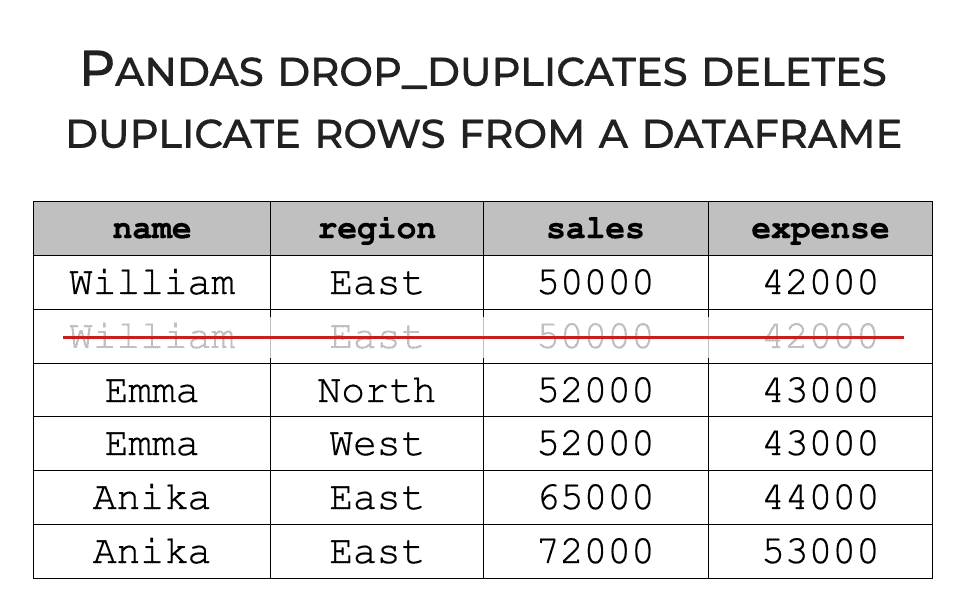

Duplicate values are a common occurrence in data science, and they come in various forms. Not only will you need to be able to identify duplicate values, but you will also need to be able to remove them from your data, a process known as de-duplication or de-duping.

There are three main types of data duplication:

- Exact duplicates are rows that contain the same values in all columns.
- Partial duplicates are rows that contain the same values in several columns, but not in all of them.
- Duplicate keys are rows that contain the same value in a single key column.

In this post, you will learn how to identify duplicate values using the `duplicated()` method and how to remove them using the `drop_duplicates()` method. We'll handle everything from rows that are completely duplicated (exact duplicates), to rows that include duplicate values in just one column (duplicate keys), to rows that include duplicate values in multiple columns (partial duplicates).

To get started, open a new Jupyter Notebook and import the pandas package as `pd` so it is easier to reference later on. Then load the data you want to check into a dataframe called `df`.

Use duplicated() to return a boolean series indicating whether a row is a duplicate

First, we'll look at the `duplicated()` method. This method returns a boolean Series indicating whether each row is a duplicate: by default, a row is `True` if it is a duplicate of a previous row. Note that the result is a Series, not a dataframe, and it uses the dataframe's index (here, the row numbers).

By default, `duplicated()` considers a row to be a duplicate only if all of its values match those of an earlier row. It also treats the first occurrence as unique, so the first row will always be `False`, and a repeated row doesn't become a duplicate until its next occurrence is encountered.

Find duplicates based on a single column with subset

In the default example, `duplicated()` looks at the entire row to determine whether it is a duplicate. If you want to find duplicates based on a single column, you can use the `subset` parameter. For example, to find duplicates based on the species column, pass `subset=['species']`. You can, of course, also combine this with the `keep` parameter (`'first'`, `'last'`, or `False`) to control which occurrences are marked as duplicates.

This tutorial will show you how to use Pandas drop duplicates to remove duplicate rows from a dataframe. It explains what the technique does and its syntax, and shows clear examples. It covers:

- An Introduction to Pandas Drop Duplicates
- Examples: How to drop duplicate rows from a dataframe

If you need something specific, you can jump to one of these sections. Having said that, if you really want to know how this technique works, you should probably read the whole tutorial.

An Introduction to Pandas Drop Duplicates

Stated simply, the Pandas drop duplicates method removes duplicate rows from a Pandas dataframe. I'll show you some examples of this in the examples section, but first, I want to quickly review some fundamentals about Pandas and Pandas dataframes. This will give you some context and help you understand exactly what this technique does and why we might use it.

Dataframes Store Python Data

First, let's quickly review what a dataframe is. A dataframe is a data structure in Python that's available in the Pandas package. (Remember: the drop duplicates method operates on Pandas dataframes.) We use dataframes to store tabular data, and we use Pandas techniques to manipulate that data.

Let's look more carefully at dataframe structure. Pandas dataframes store data in a row-and-column format. Typically, the columns of a dataframe are variables, and the rows are individual data records. For example, if you had a dataframe with sales data, the individual rows might record the sales information for individual people. If you've ever used Excel, a Pandas dataframe is a lot like an Excel spreadsheet, in the sense that they both have this row-and-column structure.

It's Possible to Have Rows with the Exact Same Data

Importantly, it's possible for a dataframe to have duplicate rows of data. For example, if two rows had the same value for every column, we'd consider those to be duplicate rows.
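A minimal sketch of the default `duplicated()` behavior described above. The dataframe is hypothetical: the `species`, `island` and `body_mass_g` columns and their values are invented for illustration, with row 2 built as an exact copy of row 0.

```python
import pandas as pd

# Hypothetical example data (invented for illustration).
# Row 2 is an exact duplicate of row 0; row 3 differs only in "island".
df = pd.DataFrame({
    "species": ["Adelie", "Gentoo", "Adelie", "Adelie"],
    "island": ["Biscoe", "Biscoe", "Biscoe", "Dream"],
    "body_mass_g": [3700, 5000, 3700, 3700],
})

# duplicated() compares each whole row with the rows above it and
# returns a boolean Series that shares df's index.
dupes = df.duplicated()
print(dupes)

# The Series can be used as a mask to look at the duplicate rows themselves.
print(df[dupes])
```

Only row 2 is flagged: row 0 is the first occurrence, so it stays `False`, and row 3 is not a full-row match because its island differs.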
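The `subset` and `keep` parameters can be sketched on the same kind of hypothetical data (columns and values invented for illustration). A single-column `subset` finds duplicate keys, a multi-column `subset` finds partial duplicates, and `keep` decides which occurrences get flagged.

```python
import pandas as pd

# Hypothetical example data (invented for illustration).
df = pd.DataFrame({
    "species": ["Adelie", "Gentoo", "Adelie", "Adelie"],
    "island": ["Biscoe", "Biscoe", "Biscoe", "Dream"],
    "body_mass_g": [3700, 5000, 3700, 3700],
})

# Duplicate keys: only the species column decides what is a duplicate.
# keep="first" (the default) leaves the first occurrence unflagged.
by_species = df.duplicated(subset=["species"])

# keep="last" flags earlier occurrences and leaves the last one unflagged.
by_species_last = df.duplicated(subset=["species"], keep="last")

# keep=False flags every row whose species appears more than once.
all_copies = df.duplicated(subset=["species"], keep=False)

# Partial duplicates: several columns, but not all of them.
partial = df.duplicated(subset=["species", "island"])

print(by_species.tolist())
print(by_species_last.tolist())
print(all_copies.tolist())
print(partial.tolist())
```

With `keep=False`, the mask is handy for reviewing every copy of a repeated key before deciding which one to keep.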
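Removing duplicates with `drop_duplicates()` takes the same `subset` and `keep` parameters as `duplicated()`. The sketch below again uses invented, hypothetical data. It assumes pandas 1.0 or later for the `ignore_index` argument, which renumbers the remaining rows.

```python
import pandas as pd

# Hypothetical example data (invented for illustration).
df = pd.DataFrame({
    "species": ["Adelie", "Gentoo", "Adelie", "Adelie"],
    "island": ["Biscoe", "Biscoe", "Biscoe", "Dream"],
    "body_mass_g": [3700, 5000, 3700, 3700],
})

# Exact duplicates: drop rows identical to an earlier row (keeps the first).
exact = df.drop_duplicates()

# Duplicate keys: keep only the first row for each species.
one_per_species = df.drop_duplicates(subset=["species"])

# Same, but keep the last row for each species (original order is preserved).
last_per_species = df.drop_duplicates(subset=["species"], keep="last")

# ignore_index=True (pandas >= 1.0) relabels the result as 0, 1, 2, ...
clean = df.drop_duplicates(ignore_index=True)

# drop_duplicates() returns a new dataframe; df itself is unchanged.
print(exact)
print(len(df))
```

Because a new dataframe is returned, you would normally assign the result (for example `df = df.drop_duplicates()`) to keep the de-duplicated data.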
